TL;DR:

- Modern manufacturing uses real-time, predictive quality monitoring instead of reactive inspections.

- Continuous monitoring improves efficiency by reducing waste and rework and by increasing throughput.

- Implementing robust measurement systems and industry benchmarks like DPMO and sigma levels is essential.

Quality monitoring is often treated as a final checkpoint rather than a continuous discipline woven into every stage of production. That view is costly. Real-time monitoring in manufacturing is now recognised as a continuous process-quality discipline, not a post-production audit. Modern systems detect process drift as it happens, prevent defects before they propagate, and generate the data that drives strategic improvement. This article walks you through how quality monitoring has evolved, what measurable benefits it delivers, how to implement it effectively, which benchmarks matter, and where the field is heading next.

| Point | Details |
|---|---|
| Shift to real-time monitoring | Modern systems detect and prevent quality issues before defects arise. |
| Data fuels efficiency gains | Process analytics and multi-stage monitoring raise yield and reduce waste. |
| Effective implementation is key | Reliable measurement, environment control, and traceability underpin successful monitoring. |
| Benchmark with sigma metrics | Using DPMO and sigma levels drives continuous improvement and high performance. |

For much of manufacturing’s history, quality was something you checked at the end. A batch of parts came off the line, an inspector measured a sample, and the decision was made: pass or fail. This approach caught visible defects, but it did nothing to prevent them. By the time a failure appeared at final inspection, hundreds of non-conforming parts had already been produced.

That model is now obsolete. Integrated sensors, connected machinery, and machine learning have shifted production quality monitoring from a reactive checkpoint to a continuous, predictive function. This shift is often described as ‘Quality 4.0’, and it moves monitoring towards predictive real-time analytics well beyond the reach of traditional tools like Statistical Process Control (SPC) or Six Sigma defect counting.

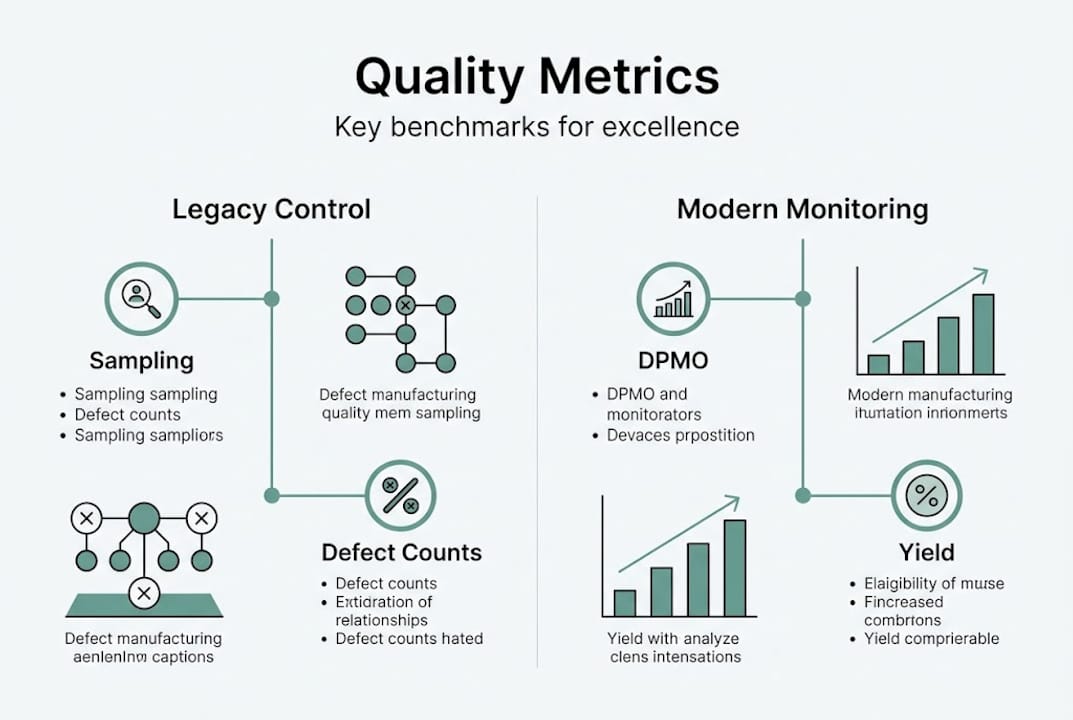

The difference is substantial. Legacy quality control relies on fixed control limits and periodic sampling. If a process drifts gradually, it can breach acceptable limits before the next sample catches it. Modern continuous monitoring tracks every cycle, identifies complex interactions between variables, and flags process drift early enough to intervene.
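
To make the contrast concrete, here is a minimal Python sketch, not a production implementation. It compares a fixed three-sigma check on individual readings with an exponentially weighted moving average (EWMA) chart, one common technique for catching slow drift. The function names, the smoothing weight `lam`, and the synthetic drifting readings are illustrative assumptions rather than anything taken from a specific monitoring product.

```python
import math


def fixed_limit_alarm(values, target, sigma, k=3.0):
    """Return the index of the first reading outside target +/- k*sigma, or None."""
    for i, x in enumerate(values):
        if abs(x - target) > k * sigma:
            return i
    return None


def ewma_alarm(values, target, sigma, lam=0.2, k=3.0):
    """Return the index of the first EWMA value outside its control limits, or None."""
    z = target  # the EWMA starts at the process target
    for n, x in enumerate(values, start=1):
        z = lam * x + (1 - lam) * z
        # Standard deviation of the EWMA statistic after n readings.
        z_sigma = sigma * math.sqrt(lam / (2 - lam) * (1 - (1 - lam) ** (2 * n)))
        if abs(z - target) > k * z_sigma:
            return n - 1
    return None


# Hypothetical process: target 10.0, sigma 1.0, drifting upward by 0.05 per cycle.
readings = [10.0 + 0.05 * i for i in range(100)]
print("fixed limit alarm at cycle:", fixed_limit_alarm(readings, 10.0, 1.0))
print("EWMA alarm at cycle:", ewma_alarm(readings, 10.0, 1.0))
```

On this noise-free ramp the EWMA chart raises its alarm well before any single reading crosses the fixed limit. Real data carries noise, so `lam` and `k` would need tuning against an acceptable false-alarm rate.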

Here is a direct comparison of the two approaches:

| Characteristic | Legacy quality control | Modern continuous monitoring |
|---|---|---|
| Timing | Post-production or periodic | Real-time, every cycle |
| Method | Sampling and fixed thresholds | Continuous data streams and adaptive thresholds |
| Defect detection | After the fact | Early warning during production |
| Data volume | Limited samples | Full process data |
| Improvement basis | Retrospective audits | Live analytics and ML-driven insights |

The benefits of adaptive, data-driven monitoring go beyond defect counting. When your system learns what normal looks like across dozens of variables simultaneously, it can detect the subtle interactions that precede failure. Fixed-threshold approaches miss these entirely. Understanding quality for operational excellence means recognising that the most expensive defects are the ones that were technically preventable.
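
A small example can show why per-variable thresholds miss interactions. The sketch below assumes just two hypothetical variables, temperature and pressure, that normally rise together, and scores new readings with the squared Mahalanobis distance from a baseline. This is one simple statistical stand-in for "learning what normal looks like", not the method any particular platform uses. The baseline data and the threshold of 9.21 (the 99% chi-square quantile for two variables) are assumptions chosen for illustration.

```python
from statistics import mean


def fit_baseline(points):
    """Estimate the mean and 2x2 covariance of (x, y) pairs from normal operation."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    mx, my = mean(xs), mean(ys)
    n = len(points)
    sxx = sum((x - mx) ** 2 for x in xs) / (n - 1)
    syy = sum((y - my) ** 2 for y in ys) / (n - 1)
    sxy = sum((x - mx) * (y - my) for x, y in points) / (n - 1)
    return (mx, my), (sxx, sxy, syy)


def mahalanobis_sq(point, baseline):
    """Squared Mahalanobis distance of a point from the fitted baseline."""
    (mx, my), (sxx, sxy, syy) = baseline
    dx, dy = point[0] - mx, point[1] - my
    det = sxx * syy - sxy ** 2  # determinant of the covariance matrix
    return (syy * dx * dx - 2 * sxy * dx * dy + sxx * dy * dy) / det


# Hypothetical baseline: pressure rises by about 0.5 per degree of temperature.
normal_runs = [
    (200 + i, 50 + 0.5 * i + (0.3 if i % 2 else -0.3)) for i in range(-10, 11)
]
baseline = fit_baseline(normal_runs)
THRESHOLD = 9.21  # assumed alert level: 99% chi-square quantile, 2 variables

on_trend = (205, 52.5)   # both variables move together, as usual
off_trend = (205, 47.5)  # each value is individually ordinary, the pairing is not
for label, reading in [("on trend", on_trend), ("off trend", off_trend)]:
    score = mahalanobis_sq(reading, baseline)
    print(label, "alert" if score > THRESHOLD else "ok")
```

Both test readings sit within the normal range of each variable taken alone. Only the joint view reveals that the second reading breaks the usual relationship between the two.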

This evolution also applies across process types. Whether you are managing quality control for CNC machining or complex assembly operations, the move from inspection to prediction is now a practical option, not a future aspiration.

With an appreciation for the evolution of quality monitoring, let’s explore its quantifiable benefits for manufacturing output and efficiency.

Continuous monitoring has a direct and measurable effect on two of the most important production metrics: waste and throughput. When quality signals are linked across upstream and downstream stages, failures that would otherwise travel undetected through the line are caught early. The result is fewer rework loops, less scrap, and a more stable production flow.

The Dana case study illustrates this clearly. Dana, a global automotive component manufacturer, implemented multi-stage real-time analytics across its plants and saw First Time Through (FTT) rise from 80% to 98%, with significant improvements in final assembly throughput. This was not a marginal gain. It represented a structural change in how quality issues were detected and resolved.

| Metric | Before monitoring | After monitoring | Change |
|---|---|---|---|
| First Time Through (FTT) | 80% | 98% | +18 percentage points |
| Failure wave frequency | High | Significantly reduced | Major reduction |
| Final assembly throughput | Baseline | Improved | Measurable uplift |
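
For readers who want to track the same metric, the sketch below shows one common way to compute First Time Through from unit-level records: the share of units that finish without rework or scrap. The `UnitResult` record and its field names are hypothetical, and the sample data is invented to land on a 98% result. It is not Dana's data.

```python
from dataclasses import dataclass


@dataclass
class UnitResult:
    unit_id: str
    reworked: bool
    scrapped: bool


def first_time_through(results):
    """Fraction of units completed with no rework and no scrap."""
    if not results:
        raise ValueError("at least one unit result is required")
    clean = sum(1 for r in results if not r.reworked and not r.scrapped)
    return clean / len(results)


# Invented shift data: 50 units, one of which needed rework.
shift = [UnitResult(f"U{i:03d}", reworked=(i == 7), scrapped=False) for i in range(50)]
print(f"FTT: {first_time_through(shift):.0%}")
```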

Linking upstream and downstream quality signals is particularly powerful for injection moulding quality control and other multi-stage processes where defects in early stages compound later. When those signals are integrated, you can trace the root cause rather than treating each symptom separately.

To turn monitoring data into strategic action, follow these steps:

“Our FTT jumped from 80% to 98% after implementing multi-facility ML analytics. The value was not just in catching defects but in understanding why they occurred across plants.”

Pro Tip: If you operate multiple facilities, link their monitoring data into a single view. Recurring quality issues that appear at one plant often originate from upstream suppliers or shared process parameters. Multi-plant visibility is where the real efficiency gains are unlocked. You can explore the real-time efficiency impact of this approach in more detail, and review MES tools for efficiency that support cross-facility integration.

Understanding what quality monitoring can accomplish means nothing without tackling its practical implementation requirements.

Dependable monitoring starts with robust measurement systems. Before you can trust data from sensors or vision systems, you need to confirm that the measurement process itself is reliable. This means calibration, traceability, and error-proofing at the measurement stage. A well-designed Measurement System Analysis (MSA) confirms whether your gauges and sensors can distinguish real process variation from measurement noise.
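
As a rough illustration of what an MSA checks, the sketch below estimates how much of the observed variation comes from the gauge itself. It is a deliberately simplified repeatability-only calculation (one operator, repeated readings per part), not a full gauge R&R study. The function name, the sample readings, and the 10% and 30% bands mentioned in the comments are assumptions to verify against your own quality standard.

```python
import math
from statistics import mean, variance


def repeatability_percent(measurements):
    """Gauge repeatability as a percentage of total observed standard deviation.

    measurements maps a part id to repeated readings of that part
    taken with the same gauge by the same operator.
    """
    groups = list(measurements.values())
    n = len(groups[0])
    if len(groups) < 2 or n < 2 or any(len(g) != n for g in groups):
        raise ValueError("need 2+ parts with the same number (2+) of readings each")
    repeat_var = mean(variance(g) for g in groups)  # pooled within-part variance
    # Variance of part means includes repeat_var / n of measurement noise.
    part_var = max(0.0, variance([mean(g) for g in groups]) - repeat_var / n)
    total_var = repeat_var + part_var
    if total_var == 0:
        raise ValueError("no variation in the data")
    return 100.0 * math.sqrt(repeat_var / total_var)


# Invented readings in millimetres for four parts, three readings each.
study = {
    "P1": [10.00, 10.02, 9.98],
    "P2": [10.50, 10.52, 10.48],
    "P3": [11.00, 11.02, 10.98],
    "P4": [9.50, 9.52, 9.48],
}
share = repeatability_percent(study)
# Assumed rule of thumb: under 10% is usually acceptable, over 30% is not.
print(f"repeatability: {share:.1f}% of total variation")
```

A low percentage suggests the gauge can separate real part-to-part differences from its own noise. A high one means the sensor data is not yet trustworthy enough to drive alerts.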

The Bosch plant in the Czech Republic demonstrated how far this matters. After implementing machine vision with a properly designed inspection setup, their error catch rate improved from 85% to between 99% and 100%. The result did not come from the technology alone. It came from tailored inspection design and a reliable underlying measurement system.

Beyond measurement systems, the physical environment plays a significant role. Factors to address before deployment include:

Digital traceability also adds strategic value beyond compliance. When every part carries a digital record of the conditions under which it was produced, you gain the ability to reconstruct the process history for any failure. This is particularly relevant for automotive and medical device manufacturers facing strict regulatory standards. Reviewing quality control tips specific to your production type helps you prioritise implementation actions in the right order.
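
A digital part record can be very simple in principle. The sketch below assumes a hypothetical in-memory log keyed by serial number. A real deployment would sit on a database or MES, and the class names, stage names, and condition fields here are purely illustrative.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class ProcessRecord:
    serial: str
    stage: str
    timestamp: datetime
    conditions: dict  # e.g. temperatures, torques, tool ids


class TraceabilityLog:
    """Stores process records and reconstructs a part's history on demand."""

    def __init__(self):
        self._by_serial = defaultdict(list)

    def record(self, rec):
        self._by_serial[rec.serial].append(rec)

    def history(self, serial):
        """All records for one part, oldest first (empty list if unknown)."""
        return sorted(self._by_serial.get(serial, []), key=lambda r: r.timestamp)


log = TraceabilityLog()
log.record(ProcessRecord("SN-001", "assembly", datetime(2024, 1, 1, 10, 0), {"torque_nm": 12.1}))
log.record(ProcessRecord("SN-001", "moulding", datetime(2024, 1, 1, 8, 0), {"melt_temp_c": 231.0}))
for rec in log.history("SN-001"):
    print(rec.timestamp.isoformat(), rec.stage, rec.conditions)
```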

Pro Tip: Engineer your inspection workstations before installing sensors, not after. Addressing lighting, contamination, and ergonomics in advance reduces false readings and operator workarounds. A strong reference point is CNC measurement system success, which outlines how measurement environment design underpins reliable data.

Once robust monitoring is in place, it’s vital to measure its performance with clear, industry-standard benchmarks.

Three metrics sit at the core of quality monitoring performance measurement: DPMO, yield, and sigma level. Each gives you a different view of how your process is performing and where improvement is needed.

DPMO stands for Defects Per Million Opportunities. It counts the number of defects relative to the total number of chances for a defect to occur. This normalises quality across processes with different complexity levels, making it possible to compare a simple stamping operation with a complex sub-assembly.
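
The calculation itself is short: defects divided by total opportunities, scaled to one million. The sketch below applies it to two invented operations to show the normalising effect. The function name and all the counts are illustrative assumptions.

```python
def dpmo(defects, units, opportunities_per_unit):
    """Defects Per Million Opportunities."""
    if units <= 0 or opportunities_per_unit <= 0:
        raise ValueError("units and opportunities_per_unit must be positive")
    if defects < 0:
        raise ValueError("defects cannot be negative")
    return defects / (units * opportunities_per_unit) * 1_000_000


# Invented figures: a simple stamping step with 2 defect opportunities per part,
# and a sub-assembly with 40 defect opportunities per unit.
stamping = dpmo(defects=12, units=2000, opportunities_per_unit=2)
sub_assembly = dpmo(defects=60, units=500, opportunities_per_unit=40)
print(f"stamping: {stamping:.0f} DPMO, sub-assembly: {sub_assembly:.0f} DPMO")
```

In this example the sub-assembly produces far more defects per unit than the stamping step, yet both come out at the same DPMO once the number of opportunities is taken into account.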

Yield is the percentage of units that complete a process stage without defects. First-pass yield and rolled throughput yield (RTY) give you different perspectives on where losses occur across a multi-stage line.
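
Rolled throughput yield is the product of the first-pass yields of each stage, which is why a line can lose more overall than any single stage suggests. The sketch below uses three invented stage yields; the function name is an assumption.

```python
import math


def rolled_throughput_yield(stage_yields):
    """Probability that a unit passes every stage first time (yields as 0..1)."""
    if not stage_yields:
        raise ValueError("at least one stage yield is required")
    if any(not 0.0 <= y <= 1.0 for y in stage_yields):
        raise ValueError("yields must be fractions between 0 and 1")
    return math.prod(stage_yields)


# Invented first-pass yields for a three-stage line.
stages = [0.98, 0.95, 0.99]
print(f"RTY: {rolled_throughput_yield(stages):.2%}")
```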

Sigma level ties these two together. Under the conventional 1.5-sigma long-term shift, a process operating at 3.4 DPMO is performing at Six Sigma, the near-perfect standard that most manufacturers use as an aspirational target.

| Sigma level | DPMO | Yield (%) |
|---|---|---|
| 3 Sigma | 66,807 | 93.32% |
| 4 Sigma | 6,210 | 99.38% |
| 5 Sigma | 233 | 99.977% |
| 6 Sigma | 3.4 | 99.9997% |
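
The figures in this table follow the usual Six Sigma convention of adding a 1.5-sigma shift to the short-term normal quantile. The sketch below reproduces that conversion with Python's standard library so you can place your own DPMO on the scale. The function names are assumptions, and the shift is a convention rather than a property of any given process.

```python
from statistics import NormalDist

CONVENTIONAL_SHIFT = 1.5  # long-term shift assumed by standard sigma tables


def sigma_level(dpmo, shift=CONVENTIONAL_SHIFT):
    """Convert DPMO to a sigma level using the shifted normal quantile."""
    if not 0 < dpmo < 1_000_000:
        raise ValueError("dpmo must be between 0 and 1,000,000 (exclusive)")
    return NormalDist().inv_cdf(1 - dpmo / 1_000_000) + shift


def yield_percent(dpmo):
    """Defect-free share of opportunities, as a percentage."""
    return 100.0 * (1 - dpmo / 1_000_000)


for value in (66_807, 6_210, 233, 3.4):
    print(f"{value:>8} DPMO -> {sigma_level(value):.2f} sigma, {yield_percent(value):.4f}% yield")
```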

These benchmarks underpin improvement targets by giving you a consistent language for comparing current performance against industry standards. They also let you set realistic, stepwise improvement goals rather than purely aspirational ones. To establish actionable monitoring goals using these metrics:

You can explore defect rate benchmarks relevant to your sector and pair them with predictive analytics for quality to set targets that are both ambitious and achievable.

With the technical roadmap mapped out, it is worth stepping back to reflect on what separates true quality leaders from the rest.

Most plants treat quality monitoring as a compliance function. They count defects, report the numbers, and close the loop. That approach satisfies auditors but leaves enormous value on the table. The real breakthrough comes from using monitoring as a live adaptive control system, not a scorecard.

The hardest part of this shift is distinguishing genuine process drift from statistical noise. Monitoring systems that generate excessive false alarms train operators to ignore alerts, which defeats the entire purpose. Managing signal versus noise through adaptive and predictive AI is what separates a monitoring installation that delivers results from one that creates alert fatigue.
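
One simple way to see the signal-versus-noise trade-off is a persistence rule: alert only when several recent readings sit beyond a warning limit on the same side of the target, so an isolated spike does not page an operator. The sketch below is a rule-based stand-in, not the adaptive or predictive AI described above. The function name, the default of two hits in a window of three, and the sample readings are illustrative assumptions.

```python
from collections import deque


def persistent_alerts(values, target, sigma, k_sigma=2.0, hits=2, window=3):
    """Indices where at least `hits` of the last `window` readings lie beyond
    target +/- k_sigma*sigma on the same side as the current reading."""
    recent = deque(maxlen=window)
    alerts = []
    for i, x in enumerate(values):
        deviation = (x - target) / sigma
        side = 1 if deviation > k_sigma else -1 if deviation < -k_sigma else 0
        recent.append(side)
        if side != 0 and sum(1 for s in recent if s == side) >= hits:
            alerts.append(i)
    return alerts


# Invented readings: one isolated spike (index 2), then a sustained shift (6 and 7).
readings = [10.0, 10.1, 12.5, 9.9, 10.0, 10.2, 12.3, 12.4, 10.1]
print(persistent_alerts(readings, target=10.0, sigma=1.0))
```

The isolated spike is ignored, while the sustained shift triggers an alert on its second reading. Tightening or loosening `hits` and `window` trades detection speed against false alarms.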

Organisations that treat monitoring data as a strategic analytics platform drive outsized improvements because they invest in learning from rare events, not just tracking common ones. A single unusual failure pattern, properly analysed, can reveal a systemic issue that saves millions. That only happens when your monitoring infrastructure is designed to surface those signals, not bury them under routine data.

For teams ready to elevate their monitoring and operational performance, here’s how you can take the next step.

Mestric’s platform is designed to support every dimension of modern quality monitoring, from real-time KPI tracking to AI-powered process optimisation. Whether you are building a monitoring programme from scratch or scaling an existing setup, our tools give you visibility and control across your entire production environment.

Explore our production quality monitoring solutions to see how connected machinery and live dashboards support practical quality improvement. You can also compare approaches in our guide on MES vs traditional manufacturing or follow our step-by-step quality guide to build a structured implementation plan for your facility.

Quality monitoring is continuous real-time tracking and analysis designed to prevent defects, while quality control typically checks finished goods against a compliance standard. The distinction is timing: monitoring acts during production, control acts after it.

Real-time monitoring reduces waste, minimises rework, and stabilises throughput by enabling immediate intervention before failures spread across the line. Dana’s FTT rose from 80% to 98% using this approach, demonstrating the scale of impact possible.

Adaptive monitoring systems detect subtle process changes and learn from data rather than relying on fixed thresholds that miss gradual drift. Managing signal versus noise through predictive AI is what prevents alert fatigue and keeps monitoring systems effective.

DPMO stands for Defects Per Million Opportunities and provides a normalised benchmark for comparing process quality across different operation types. The Six Sigma target of 3.4 DPMO is the industry standard for near-perfect quality performance.